Where is AI going?

The Political Economy of Emergent Power

Pasquale Lucio Scandizzo

Artificial Intelligence poses a distinctive problem for traditional economic analysis, above all because of its ambiguous standing as a quasi-public good. In economic theory, public goods are defined by two key properties: they are non-rivalrous (one person's use does not diminish another's) and non-excludable (no one can, in principle, be barred from using them). As a consequence, the incentives they offer to private producers tend to diverge from the public interest, and they typically call for more or less pervasive policy intervention. AI possesses only one of these two characteristics: it is non-rivalrous, but it is technically excludable, since users can be barred from its processes and products through paywalls or similar devices. In this respect, it epitomizes a whole new class of digital products with peculiar features and still undetermined property rights and regulatory regimes, for which the public sector bears growing responsibilities, both as a direct investor and as a regulator, on grounds of development, fairness, and welfare. Partly because these characteristics are still undefined, and partly because governments around the world have been slow to act, AI in this early phase of development and adoption generates exceptionally high expectations and fears. These are largely driven by the uncertainty surrounding its undefined social status and by a dramatic lack of understanding of its underlying mechanisms. This effect stems from AI's radical novelty, both as a product and as a process, and is compounded by its much-discussed “emergent properties”. These properties, especially evident in Large Language Models, range from unexpected capacities for logical and verbal elaboration to mathematical analysis and computer programming. They seem to develop spontaneously, growing non-proportionally in response to scale, data accumulation, and model complexity.
Because these properties are, by their emergent nature, unexpected, progressively increasing, and largely unexplainable, they also foster a widespread conviction that the limits and potential of AI may remain unknown for an indefinite time. In many respects, they have become a reason why the rapid evolution of AI appears to increase, rather than diminish, uncertainty, while fostering a widespread inertia among public bodies on both the investment and the regulatory fronts.

A second problem lies in the investment, adoption, and diffusion process, which differs in several important respects from past innovations and seems to offer a dramatic illustration of what economists had theorized, on the basis of earlier evidence, as endogenous growth mechanisms. These mechanisms are associated with unprecedented supply-side effects, involving massive and highly concentrated short-term capital expenditures on a range of private and strategic goods, especially data centers, specialized semiconductors, energy infrastructure, and digital hardware. Such expenditures are very unlike the incremental investment in earlier digital technologies, and they are accompanied by growing demand-side effects that also differ markedly from past experience. Although AI investment yields traditional input-output spillovers, the related mechanisms are stronger and, in some cases, accompanied by ambiguous, though possibly virtuous, side effects. These are driven by the unique features of the digital value chain, which is characterized by a high degree of global fragmentation, scalability, and networking. As investment in data centers, cloud computing, and AI applications increases, production shares tend to shift from less digitalized toward more digitalized and technology-intensive activities, both domestically and internationally. Investment in AI may therefore have important reallocative effects even before the actual adoption process begins.

For these reasons, AI appears to be associated with a significant and highly differentiated impact on productivity even during its early investment phase. In countries where digital infrastructure, skills, and complementary technologies are already in place, AI-related capital formation not only increases demand but may also improve efficiency through spillovers to complementary sectors. Supporting infrastructure, such as high-speed connectivity, cloud technologies, and advanced software stacks, likely strengthens these effects by reducing coordination costs and facilitating new modes of production organization. However, the productivity effects observed at this early stage do not reflect a true technological transformation, but rather a more intensive use of capital and a re-weighting toward higher-productivity sectors within the existing economic structure. They tend to generate investment anxiety partly because they do not represent structural change and may ultimately be reversed in the more mature phase of AI adoption and diffusion.

A final feature of AI investment that compounds the risks of the present phase of development is also linked to the dual nature of AI as a digital public good that depends critically on private supply. This feature reflects the broad success of AI service platforms and the exceptionally short payback period of the data center business model. Once completed, these facilities can generate revenue streams almost immediately through cloud services, AI training and inference workloads, and subscription-based APIs. Unlike traditional infrastructure investments, which may take years to become profitable, AI infrastructure seems capable of paying back its costs within much shorter periods, provided that utilization rates remain high. This likely explains the unprecedented ability of AI firms to attract private finance even though the diffusion of AI as a systemic technology is far from complete.

The interplay of these variables (deep uncertainty, capital intensity, rapid revenue generation, and initial productivity effects) provides a partial explanation for the unprecedented pace at which AI investments have accumulated. The dual nature of AI as an impure digital public good, sustained by private supply and uncertain property rights, provides the other side of the picture. The construction phase itself yields intermediate benefits in the form of demand stimuli, initial shifts in production shares, and growing digital service revenues. However, it remains a source of social concern because of the rapidly changing production landscape, the growing power of the high-tech giants, and the uncertain role of the public sector. In the short run, the economy experiences an upswing while its underlying technology base remains largely locked into pre-AI production structures. Nonetheless, sectors and subsectors that were already advanced in their digitalization tend to gain disproportionate weight before AI has fully penetrated the economy as a whole. The result is a transitional boom driven by capital deepening and sectoral reallocation, which precedes, but does not guarantee, the profound systemic transformation likely to be associated with long-term AI-led growth.

To briefly review some of the evidence: over the past few years, the United States and China have positioned themselves as laboratories for this highly experimental first phase of AI development, committing significant resources to hardware, software, cloud infrastructure, and AI-related R&D. In the United States, private investment in AI has accelerated sharply over the past decade. Cumulative private AI investment from 2013 to 2024 is estimated at over $470 billion, and the Stanford “AI Index” reports that U.S. private AI investment reached $109.1 billion in 2024 alone. These figures dwarf AI investment in other advanced economies, exceed China’s reported private AI investment by roughly an order of magnitude, and reflect a sustained commitment from large technology firms and venture capital markets.

China ranks second in global AI investment, with significant government funding and growing private investment, though at lower absolute levels than the United States. According to available public data, China’s total AI investment between 2019 and 2023 was roughly 60% lower than that of the United States, indicating sustained but comparatively smaller capital flows into its AI ecosystem. Yet while the United States and China together account for a large share of global AI investment, AI does not dominate total investment across the sectors of either economy.

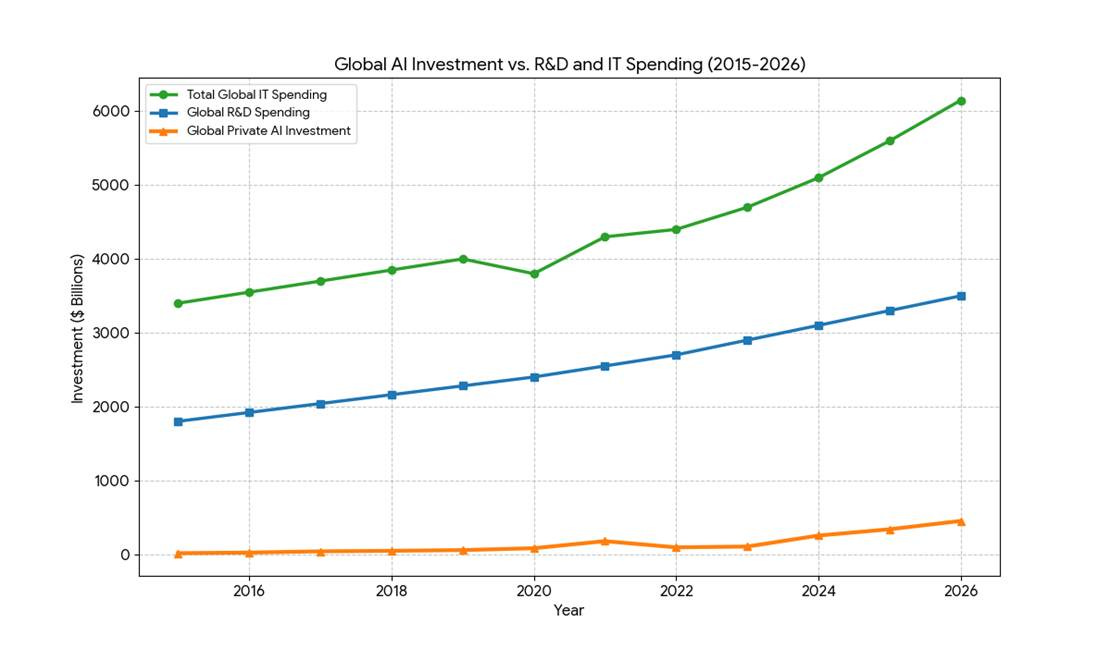

More generally, contrary to widespread perception, and despite the headline growth in AI spending, and compounding the uncertainty of its economic impact, AI-specific investments remain a relatively modest share of overall capital formation. Broader measures of capital expenditure and research show that AI is embedded within much larger streams of investment in computers, software, data centres, and R&D that also serve non-AI purposes. For example, R&D spending in China and the U.S. together exceeded $1.5 trillion in 2024, reflecting broad innovation activity across industries, only a small part of which is AI-specific. In the U.S. national accounts, the contribution of investment categories associated with AI, such as information processing equipment, software, and data centers, accounts for a significant but not dominant share of GDP growth, and their relative contribution tends to normalize over time as investment growth slows, despite elevated levels of spending (Figure 1).

Figure 1. Comparison of Investment Trends

The available data thus suggest that the drama surrounding AI development may have been inflated by a narrative centered on the spectacular properties of its unknown potential, rather than on its real and present dimensions and risks. Nor do the facts support the suggestion, advanced by many observers, that AI has been responsible for the surprising resilience of the economic system in the face of trade and policy disruptions. While AI investment is large and sustained, it is still a small proportion of R&D expenditure and far from outstripping traditional investment in core sectors such as manufacturing, infrastructure, energy, or general business capital expenditure. AI investment often flows through broader categories (e.g., computers and peripherals) that also include non-AI investment. Moreover, part of the AI investment boom consists of imported hardware and components, which dampens its direct contribution to domestic value added and capital formation in any single country.

The Rapid Payback Phenomenon and the Emerging Headwinds: Power and Obsolescence

A distinctive driver of AI investment is the unusually compressed payback period of AI data centers compared to traditional infrastructure. While a highway or office complex may take 15 to 25 years to reach profitability, AI facilities can recover their initial capital in as little as 3 to 5 years. This is made possible by the “immediate-on” revenue model: once energized, these facilities generate cash through cloud services, subscription-based APIs, and a massive shift from training workloads to high-volume inference (running models for users).

However, as we move through 2026, this rapid payback model faces structural challenges. Electricity and cooling now represent 20% to 40% of operational costs, and the “depreciation treadmill” for GPUs, which become obsolete every 2 to 3 years, forces a constant cycle of reinvestment. Despite these costs, the “Big Five” tech giants have committed nearly $690 billion in capital expenditures for 2026 alone, betting that the high-margin nature of AI inference will outweigh the rising price of power and the short lifespan of hardware. This high capital velocity explains why AI continues to attract massive private funding even as the technology’s full integration into the global economy is still in its early stages.
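The payback arithmetic behind these claims can be made explicit with a minimal sketch. It assumes simple (undiscounted) payback, a single upfront capital outlay, constant annual revenue scaled by a utilization rate, and operating costs expressed as a share of revenue; the function name `simple_payback_years` and every number in the example are hypothetical placeholders, not data from the text.

```python
"""Stylized, undiscounted payback sketch for an AI data center.

All parameters are hypothetical; they are not estimates from the text.
"""


def simple_payback_years(capex: float,
                         revenue_at_full_use: float,
                         utilization: float,
                         opex_share: float) -> float:
    """Return the years needed for annual net cash flow to recover capex.

    Net cash flow = revenue_at_full_use * utilization * (1 - opex_share),
    where opex_share is the fraction of revenue absorbed by operating costs
    such as electricity and cooling (an assumed simplification).
    """
    if capex <= 0 or revenue_at_full_use <= 0:
        raise ValueError("capex and revenue must be positive")
    if not 0.0 < utilization <= 1.0:
        raise ValueError("utilization must be in (0, 1]")
    if not 0.0 <= opex_share < 1.0:
        raise ValueError("opex_share must be in [0, 1)")
    net_cash_flow = revenue_at_full_use * utilization * (1.0 - opex_share)
    return capex / net_cash_flow


if __name__ == "__main__":
    # Hypothetical facility: 10 units of capex, 6 units of revenue per year
    # at full utilization, operating costs equal to 40% of revenue.
    for utilization in (0.9, 0.6):
        years = simple_payback_years(10.0, 6.0, utilization, 0.4)
        print(f"utilization {utilization:.0%}: payback {years:.2f} years")
```

In this toy case, lowering utilization from 90% to 60% stretches payback from about 3.1 to about 4.6 years, which is the sense in which the short payback is conditional on high utilization; if GPUs must be replaced every 2 to 3 years, a longer payback means reinvesting before the original outlay has been recovered.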

At this point, another characteristic of AI technology comes into play that calls for economic analysis. As the key element of a new evolutionary model based on algorithmic learning and closed-loop innovation, AI presents both a promise and a threat: an extreme acceleration of the innovation cycle through hardware-software co-design. While this may greatly benefit advances in science and technology, from a financial point of view it results in a depreciation treadmill, where the sheer speed of new innovation cycles renders billion-dollar hardware obsolete in just 2–3 years, forcing firms into a continuous, high-stakes reinvestment cycle to remain competitive.

This depreciation treadmill already appears as a distinctive risk to the AI payback model, closely linked to its Schumpeterian features of creative destruction through an unprecedented rate of hardware obsolescence. Unlike traditional IT, where a server might last 5–7 years, the competitive life of an AI Graphics Processing Unit (GPU) is now estimated at just 2–3 years, because a data center filled with 2024-era chips (such as the H100) is at a significant disadvantage against 2025–2026 architectures (Blackwell/Rubin). Accelerated depreciation thus creates both a barrier for new entrants and a “reinvestment treadmill” for incumbents, who must replace hardware before the original investment is fully amortized.
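The gap between an accounting life and a competitive life can be illustrated with straight-line depreciation. The sketch below assumes zero salvage value, a hypothetical 6-year accounting life (within the 5–7 year range cited for traditional servers), and a 3-year competitive life for GPUs; the function names are illustrative, and actual depreciation policies vary by firm and jurisdiction.

```python
"""Straight-line depreciation sketch of the 'reinvestment treadmill'.

Assumptions (hypothetical): zero salvage value, straight-line schedule,
hardware retired at the end of its competitive (economic) life.
"""


def annual_depreciation(cost: float, life_years: float) -> float:
    """Annual straight-line charge for an asset with zero salvage value."""
    if life_years <= 0:
        raise ValueError("life_years must be positive")
    return cost / life_years


def unamortized_at_retirement(cost: float,
                              accounting_life_years: float,
                              competitive_life_years: float) -> float:
    """Book value still on the books when the asset is retired early.

    If the competitive life is shorter than the accounting life, the
    remaining fraction of cost has not yet been depreciated.
    """
    if accounting_life_years <= 0:
        raise ValueError("accounting_life_years must be positive")
    remaining = max(0.0, 1.0 - competitive_life_years / accounting_life_years)
    return cost * remaining


if __name__ == "__main__":
    cost = 1.0  # normalized purchase cost of a GPU cluster (hypothetical)
    print("annual charge, 6-year life:", annual_depreciation(cost, 6))
    print("annual charge, 3-year life:", annual_depreciation(cost, 3))
    print("unamortized when a 6-year schedule meets a 3-year life:",
          unamortized_at_retirement(cost, 6, 3))
```

In this stylized case, half of the purchase cost is still on the books when the hardware is replaced; recognizing the shorter life up front avoids the write-off but doubles the annual depreciation charge. Either way, the burden of early replacement shows up in the accounts.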

All these developments point to a self-reinforcing model of AI development that gives rise to two different kinds of concern. First, AI’s so-called emergent properties have triggered divergent and often conflicting reactions. On the one hand, there is an enthusiastic and rapidly expanding tendency toward the pervasive use of AI as a cognitive shortcut. Surveys indicate that between 30% and 50% of knowledge workers in advanced economies now use generative AI tools weekly to summarize documents, draft texts, analyze data, or generate code, with reported time savings ranging from 20% to over 40% per task in experimental and field studies. Randomized evaluations show particularly large productivity gains for less-experienced workers, reinforcing incentives to substitute AI output for time-consuming individual analysis while ultimately complementing higher-level work that would remain the exclusive competence of humans. At the same time, as AI’s capacities seem to grow without a clear boundary, these developments are a source of concern, since AI appears increasingly capable of substituting for a growing set of complex human tasks, especially in software development and system building.

On the other hand, there is a counterreaction, qualitative in nature, that rejects generative AI. Controlled experiments and audits have documented hallucinations, factual inconsistencies, and brittle reasoning in large language models, especially outside their training data. Studies in education and culture raise concerns that generative AI homogenizes style, reduces originality, and narrows the diversity of argumentation, with early signs of convergence toward a statistically average form of expression. These findings underlie the concern that generative AI trivializes complexity rather than deepening understanding.

More subtly, there is a more general reaction that mixes enthusiasm and rejection, producing a sense of anxiety related to the scale and autonomy of generative AI, especially as it evolves into a general-purpose infrastructure of a public nature. The literature on AI governance and risk expresses concern that the increasing scale of generative AI, in terms of parameters, training data, and computing power, all of which grow by orders of magnitude every few years, creates uncertainty regarding institutional control, labor displacement, epistemic reliability, and social adaptation. This anxiety is caused not by the current errors and misuses of generative AI but by the concern that its capacity to elaborate, replicate, and reinforce itself is increasing much faster than the cognitive, professional, and institutional frameworks that are supposed to govern these processes. These reactions reflect not confusion but a structural ambivalence toward generative AI, arising from the combination of measurable efficiency gains, non-trivial epistemic weaknesses, and the challenge it poses to inherited assumptions about human judgment, creativity, and institutional governance.

The unease surrounding the impetuous advance of AI also rests on a concern of a different and deeper order, often evoked, sometimes implicitly, through the metaphor of the “selfish gene,” famously articulated by Richard Dawkins in his book of the same title. In Dawkins’ framework, genes propagate not because they are morally guided, but because they are efficient replicators that use living beings as their vehicles within a selection environment. Transposed cautiously to the present context, one might ask whether AI systems, through their capacity to scale, attract users, generate data, and command capital, are becoming the functional equivalent of such replicators. In this view, megatech firms such as Google, Microsoft, and Meta are not merely innovators or adopters, but vehicles through which AI expands, consolidates, and embeds itself in the economic structure. Ultimately, even the public sector, far from being able to supply AI technology and products as digital public goods, could become an instrument for enlarging and perpetuating AI’s pervasive influence on the economy.

Continued belief in the goodness and inevitability of technological advancement could perpetuate the feedback loop between AI and its instruments of replication in the short term. But the very power of this feedback loop could ultimately give rise to an opposing belief: that the promise of endless growth via AI cannot be sustained indefinitely or, worse still, that AI is developing into an entity with system-wide implications that could undermine the very role of human capital in economic development. The anxiety is not about malevolent intent; it is about the fear that economic selection pressures are driving growth, centralization, and substitution in ways that may affect the deep structure of social organization and public policy, including an unintended and unthinkable obsolescence of key components of human and social capital.

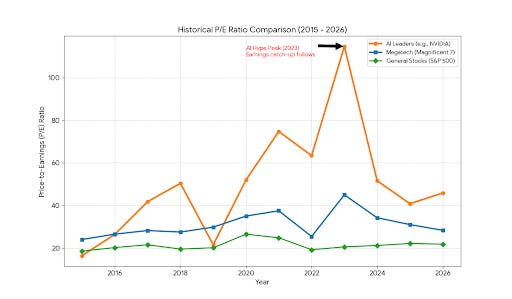

At present, some of this uneasiness manifests itself also in the form of market anxiety, since the short-term promises of AI appear to have driven the market prices of its related stocks much more than the concerns about its longer-term concerns. As Figure 2 shows, price-earnings ratios for AI-linked and megatech stocks have remained significantly above those of the broader market. Although these multiples have moderated since their peak in 2023, they still exceed levels that might be considered balanced relative to historical norms. These elevated valuations can partly be interpreted as a rational repricing of real options under conditions of radical uncertainty, reflecting investors’ expectations about AI’s transformative potential. In other words, under conditions of radical uncertainty, investors are not just buying current earnings; they are buying the exclusive right to future possibilities.

Figure 2. AI Stock Market Evaluation
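The real-options reading of these valuations rests on a simple property: the value of a right, without obligation, to invest rises with uncertainty even when the expected value of the underlying project does not change. The sketch below shows this with a one-period, two-state example; it deliberately ignores discounting and risk-neutral pricing, and the function names and all values are hypothetical.

```python
"""One-period illustration of why option value rises with uncertainty.

Simplifications (hypothetical): two equally likely states, no discounting,
no risk-neutral adjustment. The project is worth mean_value +/- spread
next period; the holder may pay `strike` to invest, or walk away.
"""


def expected_option_payoff(mean_value: float, spread: float, strike: float) -> float:
    """Expected payoff from the right (not obligation) to invest."""
    up = mean_value + spread
    down = mean_value - spread
    # The holder invests only in states where the project is worth more than its cost.
    return 0.5 * max(up - strike, 0.0) + 0.5 * max(down - strike, 0.0)


def expected_commitment_payoff(mean_value: float, strike: float) -> float:
    """Expected payoff from committing to invest today, whatever happens."""
    return mean_value - strike


if __name__ == "__main__":
    for spread in (0.0, 20.0, 50.0):
        option = expected_option_payoff(100.0, spread, 100.0)
        commit = expected_commitment_payoff(100.0, 100.0)
        print(f"spread {spread:>4}: option {option:.1f}, commitment {commit:.1f}")
```

The expected value of the project is 100 in every case, so committing today is worth nothing, yet the option is worth 0, 10, and 25 as the spread widens. In this limited sense, paying a premium for firms that hold options on AI’s future possibilities can be rational; the sketch says nothing about whether current multiples price those options correctly.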

However, this repricing also exhibits characteristics akin to replicator dynamics. Firms most tightly coupled to AI’s emergent capabilities attract disproportionate capital inflows, and that capital, in turn, accelerates further capability development and scaling. A self-reinforcing loop emerges between technological expansion and financial valuation. The companies that position themselves as champions of AI become central nodes in this cycle. At the same time, they increasingly face criticism related to market concentration, labor displacement, and potentially predatory commercial or political behavior: dynamics that simultaneously consolidate their dominance and intensify regulatory and public backlash.
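The self-reinforcing loop described above can be sketched with discrete-time replicator dynamics, in which each firm’s share of capital grows in proportion to its relative “fitness” (here, its return on capital). The assumption that fitness rises linearly with a firm’s own capital share, standing in for the capital-to-capability feedback, is an illustrative, hypothetical choice, as are the three-firm setup and all parameter values.

```python
"""Discrete-time replicator dynamics with a capital-capability feedback.

Hypothetical model: fitness_i = base_i + feedback * share_i, so firms with
more capital earn higher returns and attract still more capital.
"""


def replicator_step(shares, base_fitness, feedback):
    """One update: x_i' = x_i * f_i / f_bar, where f_bar is mean fitness."""
    fitness = [b + feedback * s for s, b in zip(shares, base_fitness)]
    mean_fitness = sum(s * f for s, f in zip(shares, fitness))
    return [s * f / mean_fitness for s, f in zip(shares, fitness)]


def herfindahl(shares):
    """Herfindahl-Hirschman concentration index on a 0-1 scale."""
    return sum(s * s for s in shares)


def simulate(shares, base_fitness, feedback, periods):
    """Return the list of share vectors, starting with the initial one."""
    path = [list(shares)]
    for _ in range(periods):
        shares = replicator_step(shares, base_fitness, feedback)
        path.append(shares)
    return path


if __name__ == "__main__":
    initial = [0.40, 0.35, 0.25]  # hypothetical capital shares of three firms
    base = [1.0, 1.0, 1.0]        # identical underlying productivity
    path = simulate(initial, base, feedback=2.0, periods=20)
    print("initial HHI:", round(herfindahl(path[0]), 3))
    print("final HHI:  ", round(herfindahl(path[-1]), 3))
    print("final shares:", [round(s, 3) for s in path[-1]])
```

Although the three firms are equally productive at the outset, the one with the largest initial share gains ground in every period and concentration rises; with `feedback=0.0` the shares stay put. The point is only that a positive capital-capability feedback is, by itself, enough to generate concentration.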

Megatech firms are not manipulated in any literal sense. Rather, they are situated in a competitive and narrative environment that strongly selects for the growth of AI. Firms respond rationally to the incentives they face, and the system changes as a result. The boom, and the rapid fear response to events such as the Anthropic announcement, reflect a market system coming to realize that AI may not be simply another productivity-enhancing technology, but rather a system that may change the nature of production structures, financial systems, and the balance of economic and political power all at once.

These developments go hand in hand with the evolution of AI from a niche, product-oriented technology into a general-purpose infrastructure with public-good characteristics, progressively affecting all sectors of the economy. The peak in the venture capital share of AI investment, reached around 2021 and driven by foundation-model startups, marks a turning point in AI’s evolution, followed since 2022 by the sudden dominance of hyperscaler and sovereign capital expenditure (data centers, chips, energy). In one respect, this is a well-known phenomenon. As with other general-purpose technologies (GPTs) in the past, a phase of capital deepening has begun that is likely to precede visible productivity effects, pointing to a familiar J-curve dynamic. This phase also marks a crucial transition in AI’s role and status, both in terms of non-rivalry and sharing (the key characteristics of a public good) and in terms of its growing capacity to influence the economy and public policy. Some of its emerging features are already visible: in the United States, a persistent core of AI investment (≈45–55% of global AI spending) driven by hyperscalers; in China, strong investment that is increasingly constrained by geopolitics and access to chips; and in Europe, the Middle East, India, and Southeast Asia, an acceleration since 2021 driven by public co-investment, sovereign AI funds, and energy-linked data-center strategies.
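The J-curve can be illustrated with a stylized calculation in the spirit of the productivity J-curve argument of Brynjolfsson, Rock and Syverson: resources devoted to building intangible complements (reorganization, training, data) are not recorded as output, so measured productivity initially dips, and it later overshoots once the accumulated intangible stock starts yielding returns. The sketch assumes a constant investment burst, a fixed return on the intangible stock, and geometric depreciation; the function name and all parameters are hypothetical.

```python
"""Stylized productivity J-curve from unmeasured intangible investment.

Hypothetical model: for the first `invest_periods` periods a fixed amount
of resources is diverted into intangible capital and is not recorded as
output; the accumulated stock then yields `return_rate` per unit per period
in measured output. The gap is measured output minus a no-AI baseline.
"""
from typing import List


def measured_productivity_gap(invest_per_period: float,
                              invest_periods: int,
                              return_rate: float,
                              depreciation: float,
                              horizon: int) -> List[float]:
    stock = 0.0  # intangible capital at the start of the period
    gaps = []
    for t in range(horizon):
        investment = invest_per_period if t < invest_periods else 0.0
        # Returns on the existing stock show up in measured output;
        # resources diverted to new intangibles do not.
        gaps.append(return_rate * stock - investment)
        stock = (1.0 - depreciation) * stock + investment
    return gaps


if __name__ == "__main__":
    path = measured_productivity_gap(1.0, 5, 0.3, 0.1, 15)
    print([round(g, 2) for g in path])
```

In this toy run, the measured gap is negative for the first four periods, turns positive as the accumulated stock starts paying off, and peaks just after the investment burst ends; the later decline reflects the assumed depreciation and the absence of further investment.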

However, the final epistemological constraint remains the fact that “we don’t know what we don’t know.” AI combines existing technologies in a manner never seen before. It brings about an unprecedented combination of statistical learning, computation, and data aggregation with rapidly advancing capabilities in reasoning, coordination, and autonomous agency. At the same time, it is advancing at full speed, while some of its internal mechanisms remain obscure even to its developers.

In conclusion, AI presents some unique characteristics of a new class of digital quasi-public goods. By combining incremental improvements with discontinuous thresholds, black-box opacity with expanding agency, and complementarity with substitution, it defies conventional approaches to policymaking. Previous technological revolutions followed trajectories that could be extrapolated from known patterns, whereas AI operates at the intersection of computing, cognition, and institutional adaptation, where second-order effects overwhelm first-order gains. It is extraordinarily difficult to predict not only how far AI may advance, but even the general direction of its ultimate impact: whether it will enhance productivity, cause displacement, centralize power, or create equilibria of human-machine collaboration. The fundamental question surrounding AI is thus no longer how fast it will advance, but what public policy can do to steer its integration into economic and social systems in the general interest.

On AI, I would like to point out a very interesting article by Citrini Research on the implications of AI in a "hypothetical future": a sort of report "from the future" on what will happen in this regard. Perhaps not everything in it is convincing, but it is very interesting.

https://www.citriniresearch.com/p/2028gic

In this regard, I believe it is underestimated that the entire financial system rests on "beliefs" more than on objective facts. I expect such "beliefs" to be incorporated by AI agents, even when they are fairly irrational or illogical, and that it will be the "perceptual" system that changes.